ABSTRACT

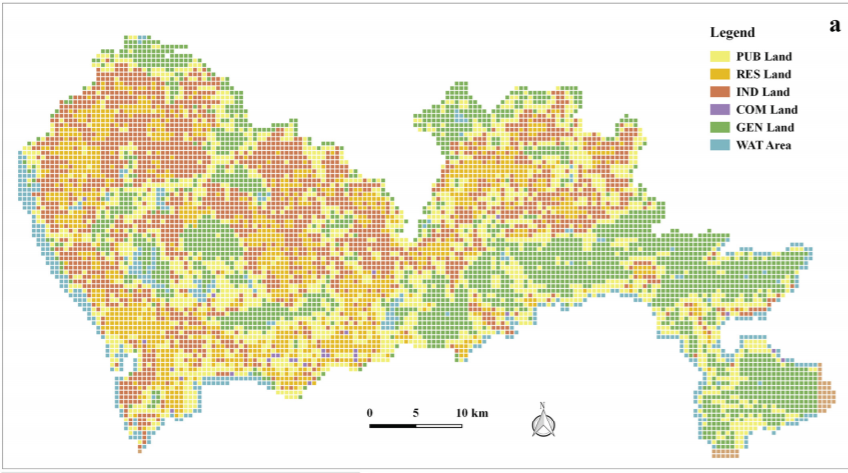

The importance of urban function recognition has driven the need for multi-source geospatial data fusion, especially the fusion of remote sensing images with spatiotemporal big data. Previous studies have often ignored the natural correspondence that exists across multi-source geospatial data describing the same object, which can degrade the performance of data fusion. This study therefore introduces a cross-correlation mechanism to capture that natural correspondence, taking remote sensing images, points of interest (POIs), and real-time social media users as an example. It proposes a new cross-correlation-based functional urban land use (CC-FLU) model to infer urban functions. The model extracts physical and human semantic features from the multi-source geospatial data, then maps them to common subspaces to obtain their respective cross-correlations. The semantic features and their cross-correlations are then integrated to classify urban functions. An experiment in Shenzhen, China, was conducted to evaluate the model at a fine scale. The proposed CC-FLU model outperformed previous methods, yielding overall accuracy (OA) and Kappa values of 0.851 and 0.812, respectively, and it also outperformed methods that use a single source of geospatial data. These results indicate that the distinctive information carried by each type of geospatial data is fully integrated into the fusion model while the natural correspondence across the multi-source data is preserved. Moreover, the approach addresses the potential drawbacks of both single-source models and fusion methods that sequentially concatenate multi-source features. The results will benefit urban planners and policy-makers.
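For readers unfamiliar with the two reported metrics, the following minimal sketch shows how OA and Cohen's Kappa are conventionally computed from a confusion matrix. The function name `overall_accuracy_and_kappa` and the toy two-class matrix are hypothetical and chosen only for illustration; they are not the study's code or data.

```python
def overall_accuracy_and_kappa(confusion):
    """Return (OA, Kappa) for a square confusion matrix.

    confusion[i][j] = number of samples of true class i predicted as class j.
    """
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    if total == 0:
        raise ValueError("confusion matrix is empty")

    # OA: fraction of samples on the diagonal (correctly classified).
    observed = sum(confusion[i][i] for i in range(n)) / total

    # Chance agreement: sum over classes of (row total * column total) / total^2.
    row_totals = [sum(row) for row in confusion]
    col_totals = [sum(confusion[i][j] for i in range(n)) for j in range(n)]
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2
    if expected == 1.0:
        raise ValueError("Kappa is undefined when chance agreement equals 1")

    # Kappa: agreement beyond chance, normalised by the maximum possible.
    kappa = (observed - expected) / (1.0 - expected)
    return observed, kappa


# Toy two-class example (hypothetical numbers, not results from the study).
toy_confusion = [
    [45, 5],
    [10, 40],
]
oa, kappa = overall_accuracy_and_kappa(toy_confusion)
print(f"OA = {oa:.3f}, Kappa = {kappa:.3f}")
```

Kappa discounts the agreement expected by chance, so it is lower than OA whenever the classifier makes any errors.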
